Level-of-Detail Modal Analysis for Real-time Sound Synthesis

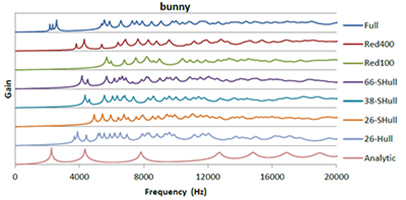

Modal sound synthesis is a promising approach for real-time, physically based sound synthesis. A modal analysis computes characteristic vibration modes from the geometry and material properties of scene objects. These modes allow efficient sound synthesis at run-time, but the analysis is computationally expensive and is therefore typically performed in a pre-processing step. In interactive applications, however, objects may be created or modified at run-time. Unless the new shapes are known upfront, the modal data cannot be pre-computed, and the modal analysis has to be performed at run-time. In this paper, we present a system that computes modal sound data at run-time for interactive applications. We evaluate the computational requirements of the modal analysis to determine the computation time for objects of different complexity. Based on these limits, we propose levels-of-detail for the modal analysis: different geometric approximations that trade accuracy for speed. We evaluate the errors introduced by the lower-resolution results. Additionally, we present an asynchronous architecture to distribute and prioritize modal analysis computations.
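To illustrate why precomputed modes make run-time synthesis cheap, the following minimal sketch renders a sound as a sum of exponentially decaying sinusoids, one per mode. It is not the system described in the paper: the function name `synthesize_modes` and the mode values (frequency, damping, amplitude) are hypothetical placeholders, whereas a real modal analysis would derive them from the object's geometry and material.

```python
import math

def synthesize_modes(modes, duration_s, sample_rate=44100):
    """Sum exponentially decaying sinusoids, one per vibration mode.

    `modes` is a list of (frequency_hz, damping_per_s, amplitude) tuples.
    The values used below are illustrative assumptions, not the output
    of an actual modal analysis.
    """
    n = int(duration_s * sample_rate)
    samples = [0.0] * n
    for freq, damping, amp in modes:
        omega = 2.0 * math.pi * freq
        for i in range(n):
            t = i / sample_rate
            # Each mode contributes a damped sinusoid at its own frequency.
            samples[i] += amp * math.exp(-damping * t) * math.sin(omega * t)
    return samples

# Hypothetical modes for a struck object (made-up values).
modes = [(440.0, 6.0, 1.0), (1130.0, 9.0, 0.5), (2250.0, 14.0, 0.25)]
signal = synthesize_modes(modes, duration_s=0.5)
```

The per-sample cost grows only with the number of modes, which is why the expensive part is the analysis that produces them, not the synthesis itself.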

@inproceedings {vriphys.20151335,

booktitle = {Workshop on Virtual Reality Interaction and Physical Simulation},

editor = {Fabrice Jaillet and Florence Zara and Gabriel Zachmann},

title = {{Level-of-Detail Modal Analysis for Real-time Sound Synthesis}},

author = {Rausch, Dominik and Hentschel, Bernd and Kuhlen, Torsten W.},

year = {2015},

publisher = {The Eurographics Association},

ISBN = {978-3-905674-98-9},

DOI = {10.2312/vriphys.20151335},

pages = {61--70}

}