Services

Besides conducting basic as well as application-oriented research, our group's second focus is providing services to support institutes of RWTH Aachen University as well as external cooperation partners in their research projects. To this end, some colleagues joined forces in the team "Infrastructure - Software - Service" (ISS), handling these service activities.

We provide crucial methodological and infrastructural components. Furthermore, we develop complex VR applications and solutions tailored to our partners' own specific requirements and data. More information can be found here.

If you are interested in cooperation or in using our VR infrastructure, please contact us via e-mail: supportteam@vr.rwth-aachen.de

Examples of Service Activities

- AeroViz: Immersive Visualization of Aerosols Spreading in Classrooms

- Immersive Visualization of Cytoskeletons

- Immersive Flow Visualization

- Navigation in Multibody Simulation Data

- VR-based Design Optimization of Cost-Efficient Electric Vehicles

- Visualizing the Novel Fuel Synthesis Inside a Virtual Biorefinery

- Agents as Peers at Work: On the Effect of the Presence of Agents, Arousal and Heterogeneity in Dynamic Tournaments

- Virtual Sketching in Immersive Virtual Environments

- Development of a Renderer for Point-Clouds from Laser Scanning

A key aspect in efficiently containing pandemics such as COVID-19 is identifying the main transmission mechanisms. Research quickly proved that aerosol-borne viruses are responsible for a significant proportion of infections. Following this finding, air cleaners to filter viruses in closed offices and classrooms were discussed as possible solutions in order to reduce the risk of airborne infections. However, since the upfront costs are considerable, especially for public office buildings or schools, the efficiency of such a solution needed to be investigated first.

The Institute for Energy Efficient Buildings and Indoor Climate of the E.ON Energy Research Center has supported this discussion with objective scientific data. To this end, they developed a model that simulates the dispersion of aerosols in a classroom. To support our colleagues, we have developed the scientific visualization AeroViz. It visualizes the simulation data by showing the concentration of aerosol-bound SARS-CoV-2 particles in a realistic and easily comprehensible immersive environment. For this purpose, we modeled a detailed classroom in which the dispersion of the aerosol clouds of the teaching staff and the students is displayed as iso-surfaces (see left figure). To render the aerosol clouds, the respective aerosol concentration for different time points had to be extracted from the simulation data. The final visualization with AeroViz allows users to examine the temporal as well as spatial dispersion of aerosols for each person in the classroom. Using the aixCAVE of RWTH Aachen University allows a collaborative view of aerosol dispersion and comprehensible knowledge transfer, making it a powerful tool for educating the public, as shown in the right figure.

Left: The aerosol spreading was computed via a complex fluid simulation based on a simplified representation of a typical classroom equipped with air filters. The teacher and pupils are shown as wooden mannequins with their respectively emitted aerosol concentration represented as a green cloud. Right: A scientist explains the mechanics of aerosol spreading to children using AeroViz in the aixCAVE.

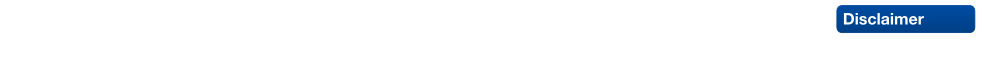

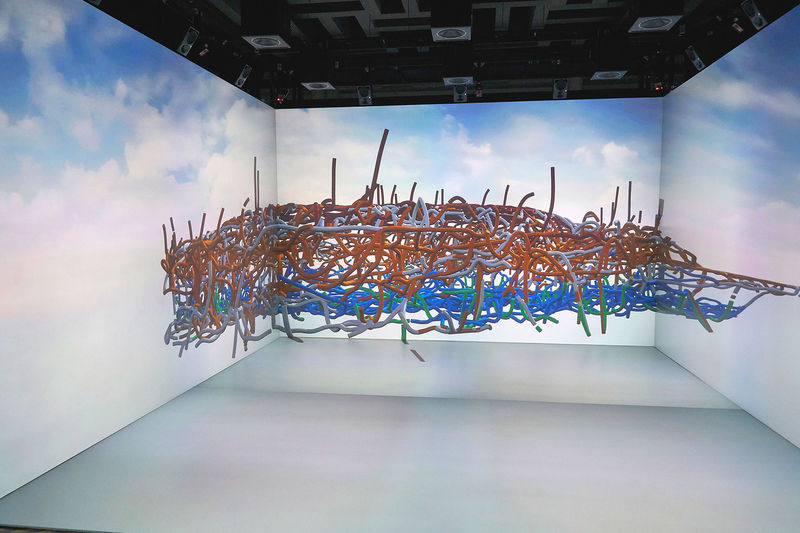
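The extraction step mentioned for AeroViz can be illustrated with a minimal sketch. It is not the AeroViz implementation: it assumes the simulation delivers one scalar concentration grid per time step (nested lists indexed `[x][y][z]`) and only finds the grid cells that an iso-surface at a chosen concentration passes through, which is the first step of a marching-cubes style extraction. The function names and the data layout are hypothetical.

```python
from itertools import product


def cells_crossing_isovalue(field, iso):
    """Return (i, j, k) indices of grid cells whose corner values straddle `iso`.

    `field` is a nested list field[x][y][z] of concentration samples
    (an assumed layout, not the format of the actual simulation data).
    """
    nx, ny, nz = len(field), len(field[0]), len(field[0][0])
    crossing = []
    for i, j, k in product(range(nx - 1), range(ny - 1), range(nz - 1)):
        # Collect the eight corner samples of the cell at (i, j, k).
        corners = [
            field[i + di][j + dj][k + dk]
            for di, dj, dk in product((0, 1), repeat=3)
        ]
        # The iso-surface passes through the cell if some corners lie
        # below the iso-value and others at or above it.
        if min(corners) < iso <= max(corners):
            crossing.append((i, j, k))
    return crossing


def crossing_cells_per_time_step(time_series, iso):
    """Apply the cell search to every time step of a {time: field} mapping."""
    return {t: cells_crossing_isovalue(f, iso) for t, f in time_series.items()}
```

A full extraction would then triangulate the surface inside each returned cell; established libraries provide this step, so the sketch stops at cell selection.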

The Institute of Molecular and Cellular Anatomy (MOCA) is currently investigating the intricacies of cytoskeletons, the dynamic networks of interlinking protein filaments present in the cytoplasm of cells. The researchers are particularly interested in the role of the keratin cytoskeleton in the homeostasis and maintenance of surface-lining epithelia. Gaining further insight here will support the understanding and treatment of various diseases ranging from genetically transmitted skin diseases to carcinogenesis.

To support our colleagues at the MOCA, we have developed an immersive visualization of the filament network, bringing the nm resolution level to the macroscale. The simulation (see more details here) thus provides novel insights into the network architecture and allows assessing the quality of the cytoskeleton's vectorization as well as comparing the cytoskeletons of different cell states. Additionally, our VR-based application allows users to interactively classify the individual filaments based on a color coding: bottom (green), nuclear (blue), top (red), lateral (yellow), and outside, e.g., of neighboring cells (grey).

Overview of a cytoskeleton in the aixCAVE (left) with users evaluating the filament classification (right). (More images can be found on our partner's website.)

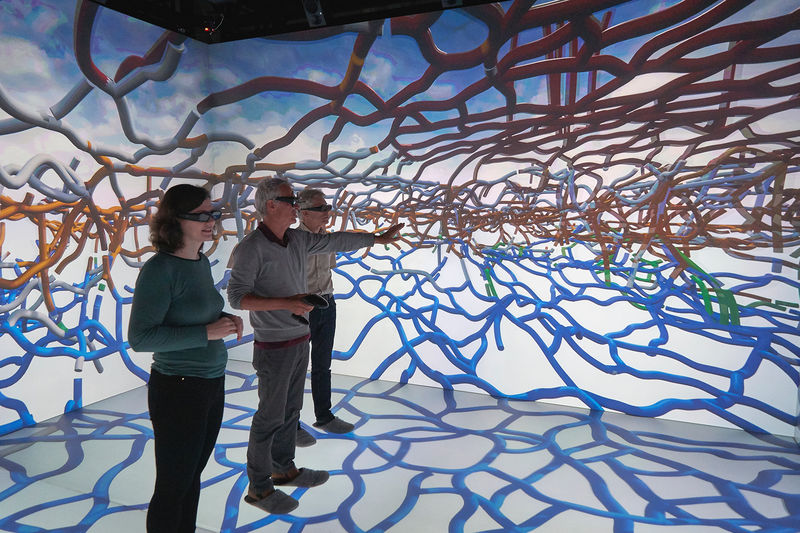
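The color coding used for the filament classification can be written down as a small lookup table. The following is only an illustrative sketch: the class names and colors come from the description above, while the RGB values, the function names and the idea of storing one label per filament are assumptions.

```python
from collections import Counter

# Class names and colors follow the text; the exact RGB triples are assumed.
FILAMENT_COLORS = {
    "bottom": (0.0, 1.0, 0.0),   # green
    "nuclear": (0.0, 0.0, 1.0),  # blue
    "top": (1.0, 0.0, 0.0),      # red
    "lateral": (1.0, 1.0, 0.0),  # yellow
    "outside": (0.5, 0.5, 0.5),  # grey, e.g. filaments of neighboring cells
}


def color_for(label):
    """Return the RGB color for a filament class; raises KeyError if unknown."""
    return FILAMENT_COLORS[label]


def count_by_class(labels):
    """Summarize how many filaments were assigned to each class."""
    return dict(Counter(labels))
```

For example, `count_by_class(["top", "top", "bottom"])` yields `{"top": 2, "bottom": 1}`, which could serve as a quick check of a classification session.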

The Institute for Technical Combustion at RWTH Aachen University (ITV) is currently investigating early flame kernel development for optimization of fuel combustion processes in automobile and aircraft engines. The ITV scientists are particularly interested in the transition from laminar to turbulent flow, which occurs in the early phase of the combustion process. The flame is sensitive and its development over time largely determines the efficiency of energy conversion and pollutant emissions.

To support our colleagues at the ITV, we have developed an immersive, time-variant visualization of their flame kernel development dataset. The flame kernel is presented as temperature isosurfaces, and the user can interact with the dataset and its surroundings in a virtual laboratory setting. The application supports head-mounted displays for single-user analysis and the aixCAVE for multi-user analysis and visualization.

Immersive visualization of a flame kernel. The color of the isosurface indicates zones of high (red) and low (blue) heat growth.

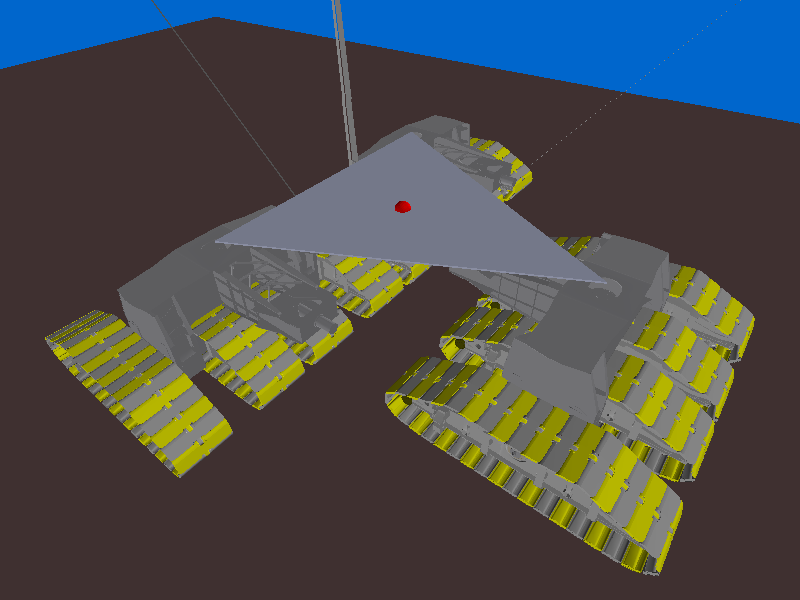

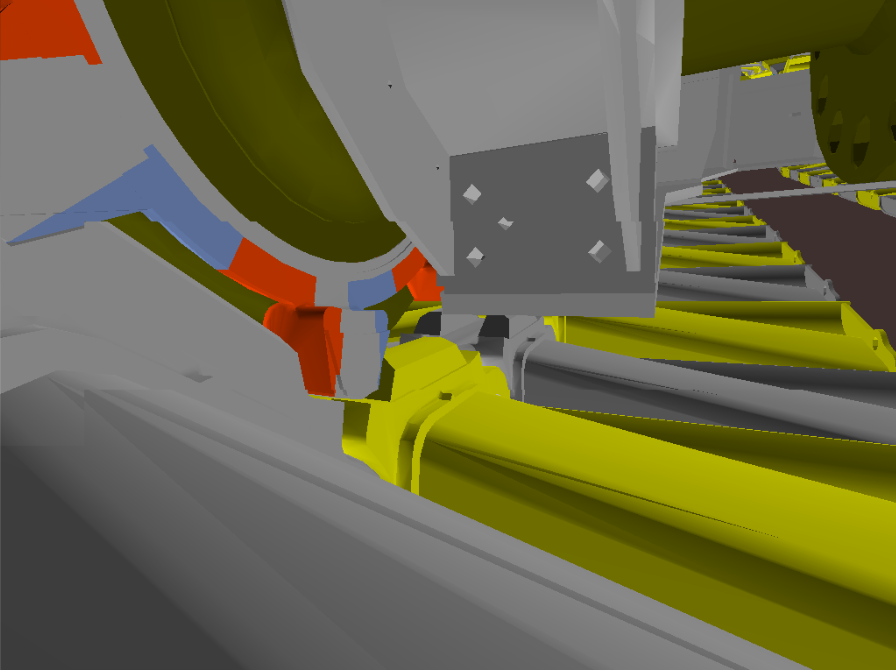

Simertis GmbH provides services and software solutions for multibody simulations, offering a comprehensive spectrum of solutions in the fields of engineering, system analysis, consulting, software development and software sales. To provide a VR experience of the multibody simulation data, we teamed up.

The real-time performance required for an appropriate visualization can be achieved by focusing on the animation information instead of using classical geometry flip-book approaches, such as switching between pre-computed time steps. By encoding the trajectories of the objects, we can later interpolate between them to produce smoother movements that are in phase with the rendering process, even at lower frame rates. This provides several advantages, such as the ability to navigate through the animation to a specific moment in time and get the exact positions and orientations of all rigid bodies involved in the simulation.

Overview of an undercarriage of a bucket wheel excavator with twelve tracks (left) and detail view on the mechanics of one track (right).
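The trajectory-based approach described for the multibody data can be sketched as keyframe interpolation. This is a minimal illustration, not the project's code: it assumes each rigid body stores strictly increasing key times with a position and a unit quaternion `(w, x, y, z)` per key, and it uses linear interpolation for positions and spherical linear interpolation (slerp) for orientations. All names are hypothetical.

```python
import bisect
import math


def lerp(a, b, t):
    """Component-wise linear interpolation between two tuples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # Take the shorter arc: q and -q describe the same rotation.
        q1, dot = tuple(-c for c in q1), -dot
    if dot > 0.9995:
        # Nearly identical orientations: fall back to normalized lerp.
        q = lerp(q0, q1, t)
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)


def sample_pose(times, positions, rotations, t):
    """Return (position, rotation) of a body at time t, clamped to the key range.

    Requires at least two keyframes with strictly increasing times.
    """
    t = min(max(t, times[0]), times[-1])
    hi = min(max(bisect.bisect_right(times, t), 1), len(times) - 1)
    lo = hi - 1
    u = (t - times[lo]) / (times[hi] - times[lo])
    return lerp(positions[lo], positions[hi], u), slerp(rotations[lo], rotations[hi], u)
```

Because the pose is evaluated for an arbitrary time `t`, the renderer can query it once per frame regardless of the frame rate, and jumping to a specific moment of the animation is a single call.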

The e.GO Mobile AG, headquartered on RWTH Aachen Campus, develops and constructs cost-efficient electric vehicles for short-distance travel. Since March 2016, a VR-based design optimization has been integrated into the highly iterative development processes used by production researcher and e.GO Mobile CEO Professor Günther Schuh and his team.

Extending the methodological toolbox of the development processes with Virtual Reality became possible through a cooperation between the e.GO Mobile AG and our research and service group. Visualizing the planned vehicles in the highly immersive virtual environment of the aixCAVE at RWTH Aachen University enables the researchers to evaluate the vehicles’ design and discuss optimization options.

Currently, two different use cases are integrated into this design evaluation. First, the e.GO Mobile AG team is able to investigate the exterior of the designed vehicle, shown in the left figure. By physically walking around the life-sized virtual prototype of the current design, they can see and discuss every single detail of the outer body of the vehicle, e.g., the arrangement of the various headlights and the proportions of each part in relation to the whole vehicle. If needed, the visibility of designated parts of the vehicle’s exterior can even be toggled manually to focus on a single detail. Second, the team can gain more insight into their virtual vehicle. As shown in the right figure, they can easily take the driver’s seated position. This enables the team to review the interior of the car and, perhaps even more importantly, check the driver’s field of vision in all directions. Performing both use cases during the design optimization discussion phases ensures that the final vehicle not only has an optimal design but is also suitable for driving safely in crowded cities like Aachen or on highways, as drivers can see an adequate portion of the road to quickly notice other road users as well as pedestrians. A brief insight is given by a short video entitled e.GO@aixCAVE.

Left: Visualization of the virtual, electric vehicle inside the aixCAVE. Right: e.GO Mobile CEO Professor Günther Schuh takes a seat in the virtual vehicle during a design optimization discussion phase.

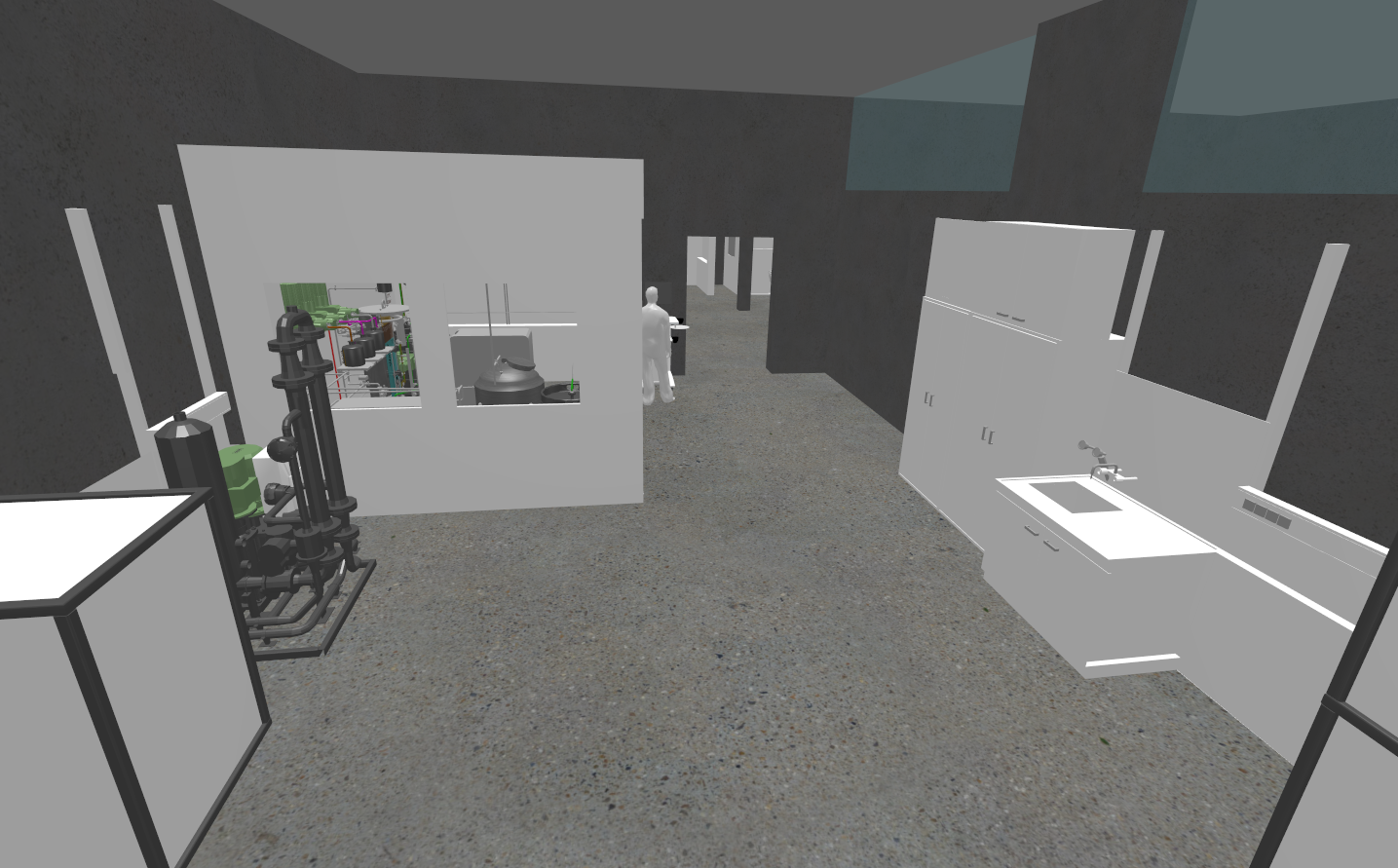

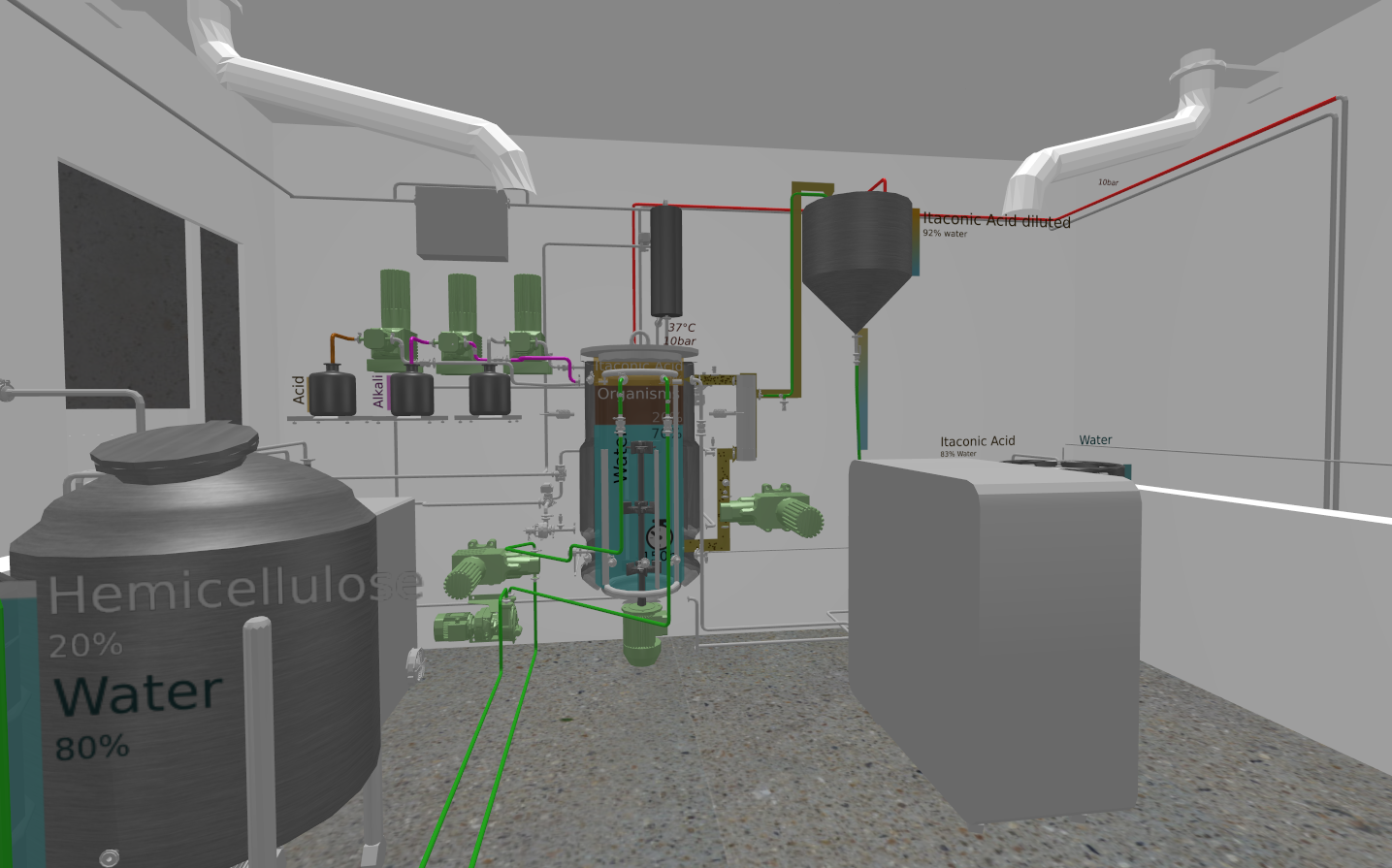

Due to the rising energy demand and the limited availability of fossil energy resources, the Cluster of Excellence: Tailor-Made Fuels from Biomass (CoE TMFB) focuses on developing new alternative fuels from biomass, which will not compete with the food chain and thus set new standards of sustainability. In addition to molecular fuel design, the CoE developed novel process concepts for the synthesis of the platform chemical itaconic acid utilizing biological waste such as wood.

The Aachener Verfahrenstechnik (AVT), one of the members of the CoE, is currently building a modular biorefinery for research purposes at technical scale. It will be located within the Center for Next Generation Processes and Products (NGP2) at Campus Melaten in Aachen. In close collaboration with AVT, we designed a Virtual Reality (VR) walkthrough displaying the prototype of this new biorefinery. Utilizing Inside, a VR application developed in our research and service group, we scripted a scenario including not only the biorefinery’s building complex but also all the important devices in its interior. To allow adequate exploratory navigation through the biorefinery, users are able to visit all the points of interest by choosing pre-defined navigation paths. Additionally, it is always possible to navigate freely through the building. The resulting VR application thus has three benefits. First, it demonstrates the feasibility of the planned apparatuses for operators at AVT before equipment is ordered. Second, it provides third parties with insight into the overall process concept with its individual unit operations and process steps. Third, it highlights the mechanisms and challenges, using textual descriptions at the reactors and separation equipment. Thus, the user can easily understand the involved mechanisms and challenges as well as the basic concepts utilized in the production route design and track different chemical compounds from their origin to the product.

We teamed up with AVT and the Division for Technology Transfer to represent RWTH Aachen at the Hannover Messe 2016, presenting the biorefinery on a semi-immersive display. The demonstration of the synthesis approach was well received, both for the technique itself and for the application. Since then, the application has been shown on multiple occasions, e.g., at the annual meeting of ProcessNet, a process engineering initiative supported by DECHEMA, the expert network for chemical engineering and biotechnology in Germany.

Left: Production apparatuses inside one room of the biorefinery. Right: A fermentation reactor producing itaconic acid, with textual descriptions to visualize the intrinsic processes.

Understanding peer effects is of utmost importance for the design of work organizations. Do the presence and the productivity of a peer have an effect on a subject’s performance in a competitive situation? Do intermediate scores during work affect incentive effects? The answers to these questions will have important implications for incentive provision in firms.

In this interdisciplinary project, kindly funded by the Research Area Managerial and Organization Economics, we and our partners from Experimental Economics, the Chair of Organization, and the Chair of Human Resource Management and Personnel Economics at RWTH Aachen University aim to understand the determinants of peer effects. For this, we designed an economic real-effort experiment in the aixCAVE at RWTH Aachen University, giving a high degree of control over the complete experimental setting. When a subject enters the aixCAVE, she is immersed in a virtual production hall. The subject then performs a real-effort sorting task at a virtual conveyor belt. To explore peer effects in a controllable way, we embedded a virtual, computer-controlled, male agent as a co-worker. He works independently in the same work location, within the subject’s field of view, performing an identical sorting task. However, his productivity can be manipulated. This way, we are able to observe non-confounded peer effects, as the reflection problem (who influences whom) does not occur.

After two studies with promising results were conducted in January and July 2015, the initial setup was extended: instead of choosing only between two distinct, pre-defined behaviors for the co-worker, we are now able to vary the agent’s behavior, and thus his productivity, more smoothly. With this, we plan to evaluate the incentive effect of competition: the subject receives a higher payment if she performs better than her opponent, the co-worker. To provide intense competition, the agent’s productivity will be adjusted to the subject’s productivity. Furthermore, the subject will be able to continuously track her own and the agent’s productivity on a virtual monitor. The study will be conducted in November 2016.

Users performing a real-effort sorting task in the aixCAVE, seeing a virtual co-worker independently fulfilling the identical task.
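How the agent's productivity might be adjusted to the subject's productivity can be illustrated with a simple update rule. The source does not describe the actual adjustment scheme, so everything here is an assumption: productivity is taken as sorted items per minute, the agent moves a fixed fraction (`gain`) toward the subject's measured rate at each update, and the result is clamped to a plausible range.

```python
def adapt_agent_rate(agent_rate, subject_rate, gain=0.5,
                     min_rate=0.0, max_rate=float("inf")):
    """Move the agent's productivity a fraction `gain` toward the subject's.

    All parameters are hypothetical: rates are items per minute, gain=1.0
    copies the subject immediately, smaller gains give smoother changes.
    """
    new_rate = agent_rate + gain * (subject_rate - agent_rate)
    # Keep the agent within a range it can plausibly animate.
    return min(max(new_rate, min_rate), max_rate)
```

Calling the function repeatedly with fresh measurements lets the agent track the subject gradually instead of switching between two fixed behaviors.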

Traditionally, sketches are drawn by hand on a flat surface, like paper. In this way, the artist, designer or architect can directly incorporate ideas and impressions into the drawing. This process can be, on the one hand, a methodological way of thinking (a rational process following a concrete function) or, on the other hand, an undetermined, experimental act of making and testing. Normally, it is restricted to 2D depictions of a 3D world. To better capture this three-dimensionality and to integrate this open act of reflecting by using the body’s knowledge, architecture students have started creating virtual projects on computer systems. The resulting 3D draft enables the designer herself and the viewers to get a spatial impression of the work. The first drafts, volumes and relations can be experienced, compared and controlled by movements in the space. Moreover, a physical and tangible correspondence between content and process can take place during the creative phase. However, to maintain the creativity in the drawing process, a natural and intuitive interface is required.

To this end, a Virtual Reality-based tool for sketching in immersive virtual environments was developed as part of a diploma thesis in our research and service group and later on extended in an interdisciplinary cooperation with the Department of Visual Design (Prof. Thomas H. Schmitz, Hannah Groninger). It incorporates the advantages of classical open, undetermined hand-drawn sketches and 3D computer drafts: the drawer is immersed in her draft or model inside the aixCAVE at RWTH Aachen. She can freely move and look around in the virtual scenery and sketch 3D strokes directly into the environment using tracked input devices.

To facilitate artistic creativity, the sketching tool provides several options to the artist, designer or architect, starting with different brush forms with adjustable color and texture. Further functions allow drawing straight lines and directly creating 3D shapes like spheres and boxes, while session management provides undo, saving, and a replay of the one- or two-handed sketching process. Also, 3D model files from 3D modeling software, like Blender or 3DS Max, can be used as scenery, enabling the drawers to sketch directly inside an already existing model, to add features or highlight parts of the work. After sketching, the work can be converted into a 3D model that can be opened and edited with most modeling software.

The tool is currently used in the module “Virtual Sketching” for Master’s degree students in the fields of architecture and urban planning at RWTH Aachen. Some results can be found here and here.

Teaching Award Project 2017!

Two students immersed in the aixCAVE, designing artworks using the Virtual Sketching tool.

Laser scanning is a common method to rapidly capture shapes of single objects, complete buildings and even widespread landscapes. Besides application areas such as archaeology, preservation of cultural heritage or architecture, police forces all over the world use laser scans to preserve crime scenes for subsequent exploration and analysis. Currently, police officers and investigators visualize the resulting point-clouds on normal desktop systems to carry out their analyses. However, being immersed in the data is sometimes beneficial, e.g., to investigate the crime by re-enacting it with the victims in a safe environment. Thus, we cooperated with the police in North Rhine-Westphalia to develop a Virtual Reality application for rendering the point-clouds of laser scans in real-time inside the immersive virtual environment of the aixCAVE at RWTH Aachen University.

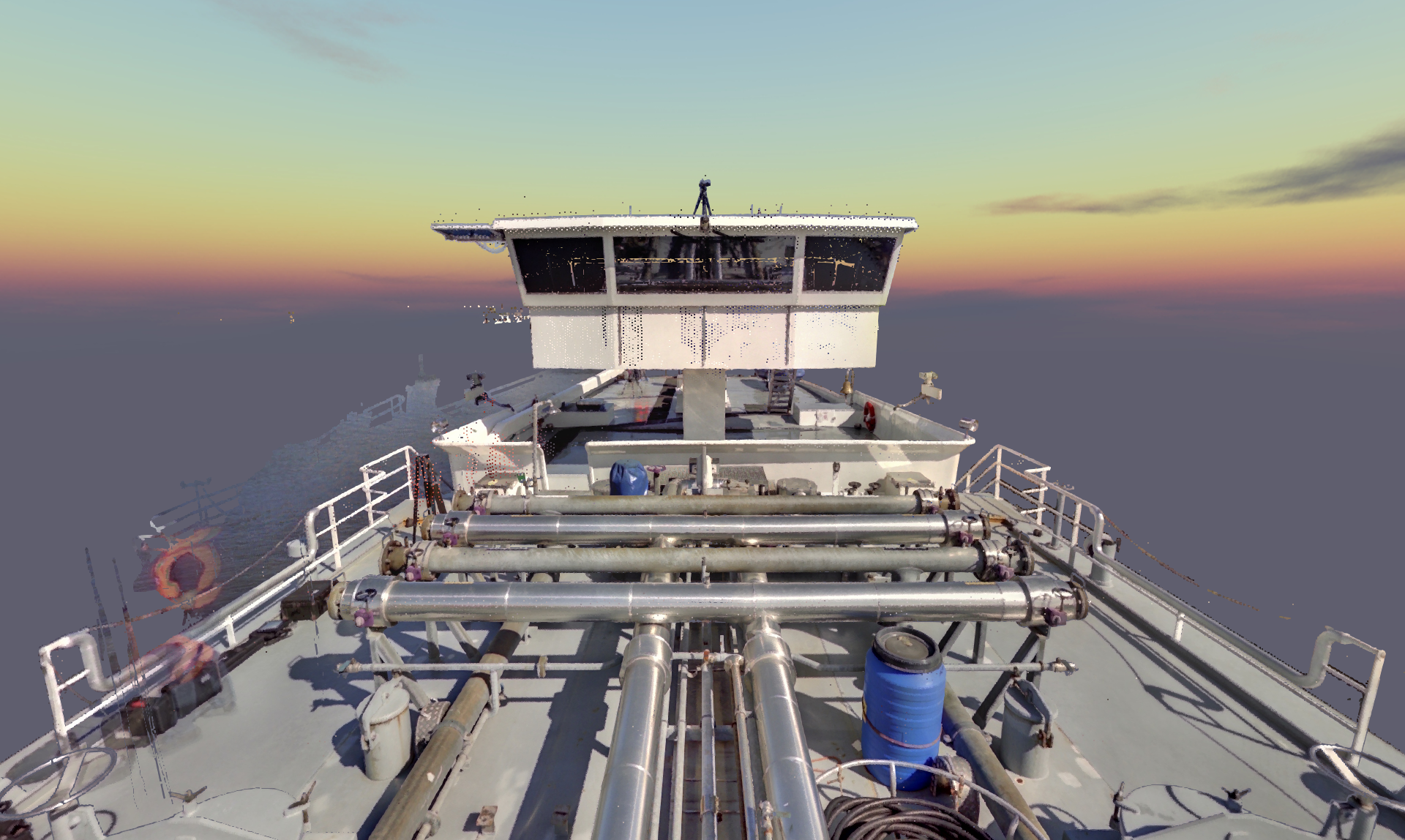

The resulting application was presented during the 13th International Police Workshop Photogrammetry / Laser Scanning, which took place from July 11th to 15th, 2016. During an excursion to the Virtual Reality Lab of RWTH Aachen, the participants, about 100 specialists for crime scene and traffic accident documentation and damage visualization, police officers, forensic specialists, scientists, surveyors and coroners from 12 nations, were immersed in a point-cloud of a well-known scenario. The goal of this excursion was to weigh up the advantages and disadvantages of extending the methodological toolbox of crime and accident scene visualization with Virtual Reality. During a practical exercise at the beginning of the workshop, the participants had to measure and document the simulated disaster of a tanker vessel explosion at the Neuss Harbor via different laser scanners from ashore, on and below the ship’s deck, from the air and from underwater. All the resulting laser scans were later combined into one single point-cloud and finally visualized with our point-cloud renderer. In this way, the participants could evaluate not only the VR experience of the tanker scenario, but also the quick and uncomplicated process from scanning to rendering.

The VR-based crime scene inspection was rated well by the participants, opening up new opportunities for crime and accident scene visualization.

Left: Participants of the police workshop immersed in the aixCAVE. Right: A screenshot of the application with the point-cloud-data of a tanker vessel, scanned during the workshop.
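Combining several laser scans into one point-cloud, as done for the tanker scenario, can be sketched as follows. This is not the workflow used at the workshop: the sketch assumes that each scan already comes with a known registration pose (a 3x3 rotation matrix and a translation vector into a common world frame) and simply transforms and concatenates the points. Function names and data layout are hypothetical.

```python
def transform_points(points, rotation, translation):
    """Apply a rigid transform p' = R * p + t to a list of (x, y, z) points."""
    return [
        tuple(
            sum(rotation[r][c] * p[c] for c in range(3)) + translation[r]
            for r in range(3)
        )
        for p in points
    ]


def merge_scans(scans):
    """Merge scans given as (points, rotation, translation) into one cloud.

    The registration poses are assumed to be known; estimating them is a
    separate problem that this sketch does not address.
    """
    merged = []
    for points, rotation, translation in scans:
        merged.extend(transform_points(points, rotation, translation))
    return merged
```

In practice, the merged cloud would typically also be thinned out or organized in a spatial data structure before real-time rendering, which is left out here.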