Profile

Prof. Dr. Tom Vierjahn

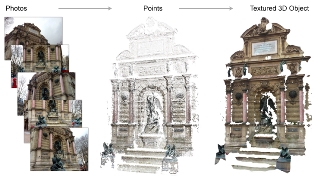

Dissertation: Online Surface Reconstruction From Unorganized Point Clouds With Integrated Texture Mapping, Westfälische Wilhelmsuniversität Münster, 2015

Publications

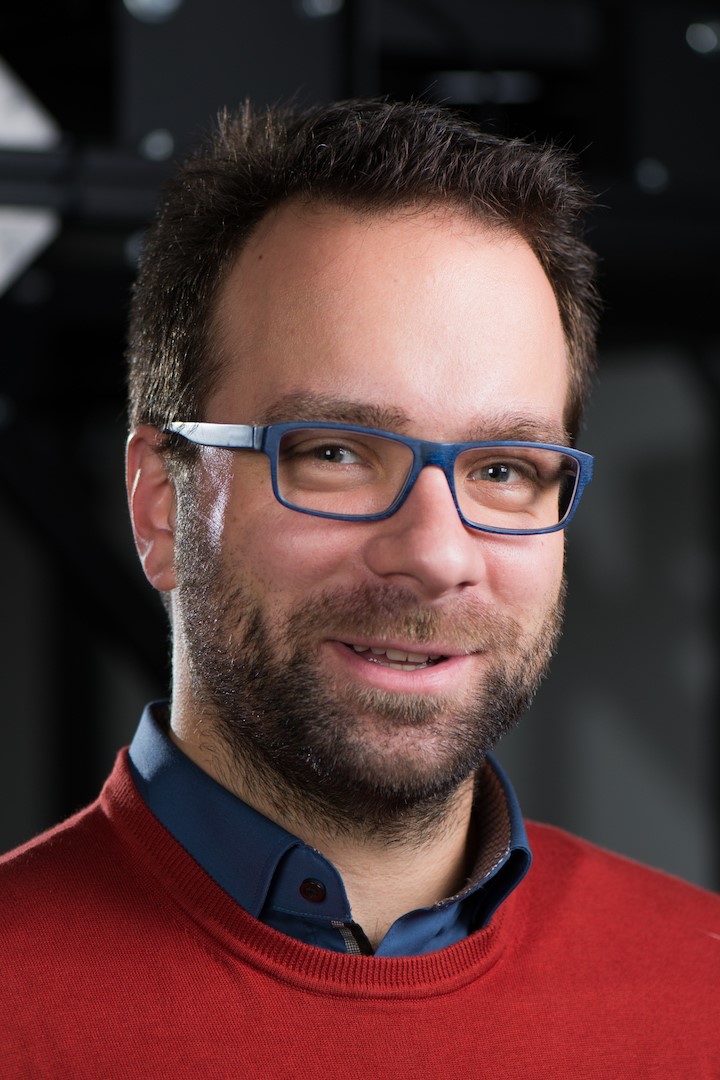

Social VR: How Personal Space is Affected by Virtual Agents’ Emotions

Personal space (PS), the flexible protective zone maintained around oneself, is a key element of everyday social interactions. For example, it affects people's interpersonal distance and is thus largely involved when navigating through social environments. However, PS is regulated dynamically: its size depends on numerous social and personal characteristics, and its violation evokes different levels of discomfort and physiological arousal. Thus, gaining more insight into this phenomenon is important.

We contribute to the PS investigations by presenting the results of a controlled experiment in a CAVE, focusing on German males aged 18 to 30. The PS preferences of 27 participants were sampled while they were approached by either a single embodied, computer-controlled virtual agent (VA) or by a group of three VAs. To investigate the influence of a VA's emotions, we varied the agents' facial expressions between angry and happy. Our results indicate that both the emotion and the number of approaching VAs influence the PS: participants chose larger distances to angry VAs than to happy ones, and single VAs were allowed closer than the group. Thus, our study is a foundation for social and behavioral studies investigating PS preferences.

@InProceedings{Boensch2018c,

author = {Andrea B\"{o}nsch and Sina Radke and Heiko Overath and Laura M. Asch\'{e} and Jonathan Wendt and Tom Vierjahn and Ute Habel and Torsten W. Kuhlen},

title = {{Social VR: How Personal Space is Affected by Virtual Agents’ Emotions}},

booktitle = {Proceedings of the IEEE Conference on Virtual Reality and 3D User Interfaces (VR) 2018},

year = {2018}

}

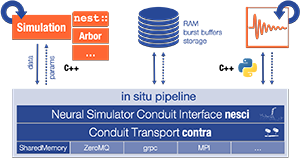

Streaming Live Neuronal Simulation Data into Visualization and Analysis

Neuroscientists want to inspect the data their simulations are producing while these are still running. This will on the one hand save them time waiting for results and therefore insight. On the other, it will allow for more efficient use of CPU time if the simulations are being run on supercomputers. If they had access to the data being generated, neuroscientists could monitor it and take counter-actions, e.g., parameter adjustments, should the simulation deviate too much from in-vivo observations or get stuck.

As a first step toward this goal, we devise an in situ pipeline tailored to the neuroscientific use case. It is capable of recording and transferring simulation data to an analysis/visualization process, while the simulation is still running. The developed libraries are made publicly available as open source projects. We provide a proof-of-concept integration, coupling the neuronal simulator NEST to basic 2D and 3D visualization.

@InProceedings{10.1007/978-3-030-02465-9_18,

author="Oehrl, Simon

and M{\"u}ller, Jan

and Schnathmeier, Jan

and Eppler, Jochen Martin

and Peyser, Alexander

and Plesser, Hans Ekkehard

and Weyers, Benjamin

and Hentschel, Bernd

and Kuhlen, Torsten W.

and Vierjahn, Tom",

editor="Yokota, Rio

and Weiland, Mich{\`e}le

and Shalf, John

and Alam, Sadaf",

title="Streaming Live Neuronal Simulation Data into Visualization and Analysis",

booktitle="High Performance Computing",

year="2018",

publisher="Springer International Publishing",

address="Cham",

pages="258--272",

abstract="Neuroscientists want to inspect the data their simulations are producing while these are still running. This will on the one hand save them time waiting for results and therefore insight. On the other, it will allow for more efficient use of CPU time if the simulations are being run on supercomputers. If they had access to the data being generated, neuroscientists could monitor it and take counter-actions, e.g., parameter adjustments, should the simulation deviate too much from in-vivo observations or get stuck.",

isbn="978-3-030-02465-9"

}

Does the Directivity of a Virtual Agent’s Speech Influence the Perceived Social Presence?

When interacting and communicating with virtual agents in immersive environments, the agents’ behavior should be believable and authentic. One important aspect of this is a convincing auralization of their speech. In this work-in-progress paper, we present a study design to evaluate the effect of adding directivity to a speech sound source on the perceived social presence of a virtual agent. We describe the study design and discuss first results of a prestudy as well as consequential improvements of the design.


@InProceedings{Boensch2018b,

author = {Jonathan Wendt and Benjamin Weyers and Andrea B\"{o}nsch and Jonas Stienen and Tom Vierjahn and Michael Vorl\"{a}nder and Torsten W. Kuhlen},

title = {{Does the Directivity of a Virtual Agent’s Speech Influence the Perceived Social Presence?}},

booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE)},

year = {2018}

}

Towards Understanding the Influence of a Virtual Agent’s Emotional Expression on Personal Space

The concept of personal space is a key element of social interactions. As such, it is a recurring subject of investigations in the context of research on proxemics. Using virtual-reality-based experiments, we contribute to this area by evaluating the direct effects of emotional expressions of an approaching virtual agent on an individual’s behavioral and physiological responses. As a pilot study focusing on the emotion expressed solely by facial expressions gave promising results, we now present a study design to gain more insight.

@InProceedings{Boensch2018b,

author = {Andrea B\"{o}nsch and Sina Radke and Jonathan Wendt and Tom Vierjahn and Ute Habel and Torsten W. Kuhlen},

title = {{Towards Understanding the Influence of a Virtual Agent’s Emotional Expression on Personal Space}},

booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE)},

year = {2018}

}

Talk: Streaming Live Neuronal Simulation Data into Visualization and Analysis

Being able to inspect neuronal network simulations while they are running provides new research strategies to neuroscientists, as it enables them to perform actions like parameter adjustments in case the simulation performs unexpectedly. This can also save compute resources when such simulations are run on large supercomputers, as errors can be detected and corrected earlier, saving valuable compute time. This talk presents a prototypical pipeline that enables in-situ analysis and visualization of running simulations.

Talk: Influence of Emotions on Personal Space Preferences

Personal Space (PS) is regulated dynamically by choosing an appropriate interpersonal distance when navigating through social environments. This key element in social interactions is influenced by numerous social and personal characteristics, e.g., the nature of the relationship between the interaction partners and the other’s sex and age. Moreover, affective contexts and expressions of interaction partners influence PS preferences, evident, e.g., in larger distances to others in threatening situations or when confronted with angry-looking individuals. Given the prominent role of emotional expressions in our everyday social interactions, we investigate how emotions affect PS adaptions.

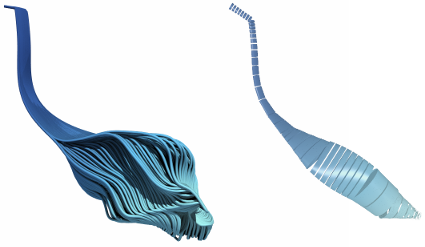

Interactive Exploration of Dissipation Element Geometry

Dissipation elements (DE) define a geometrical structure for the analysis of small-scale turbulence. Existing analyses based on DEs focus on a statistical treatment of large populations of DEs. In this paper, we propose a method for the interactive visualization of the geometrical shape of DE populations. We follow a two-step approach: in a pre-processing step, we approximate individual DEs by tube-like, implicit shapes with elliptical cross sections of varying radii; we then render these approximations by direct ray-casting thereby avoiding the need for costly generation of detailed, explicit geometry for rasterization. Our results demonstrate that the approximation gives a reasonable representation of DE geometries and the rendering performance is suitable for interactive use.

@InProceedings{Vierjahn2017,

booktitle = {Eurographics Symposium on Parallel Graphics and Visualization},

author = {Tom Vierjahn and Andrea Schnorr and Benjamin Weyers and Dominik Denker and Ingo Wald and Christoph Garth and Torsten W. Kuhlen and Bernd Hentschel},

title = {Interactive Exploration of Dissipation Element Geometry},

year = {2017},

pages = {53--62},

ISSN = {1727-348X},

ISBN = {978-3-03868-034-5},

doi = {10.2312/pgv.20171093},

}

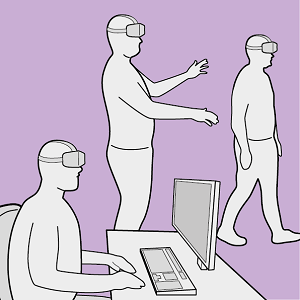

Utilizing Immersive Virtual Reality in Everyday Work

Applications of Virtual Reality (VR) have been repeatedly explored with the goal to improve the data analysis process of users from different application domains, such as architecture and simulation sciences. Unfortunately, making VR available in professional application scenarios or even using it on a regular basis has proven to be challenging. We argue that everyday usage environments, such as office spaces, have introduced constraints that critically affect the design of interaction concepts since well-established techniques might be difficult to use. In our opinion, it is crucial to understand the impact of usage scenarios on interaction design, to successfully develop VR applications for everyday use. To substantiate our claim, we define three distinct usage scenarios in this work that primarily differ in the amount of mobility they allow for. We outline each scenario's inherent constraints but also point out opportunities that may be used to design novel, well-suited interaction techniques for different everyday usage environments. In addition, we link each scenario to a concrete application example to clarify its relevance and show how it affects interaction design.

Remain Seated: Towards Fully-Immersive Desktop VR

In this work we describe the scenario of fully-immersive desktop VR, which serves the overall goal of seamlessly integrating with existing workflows and workplaces of data analysts and researchers, such that they can benefit from the gain in productivity when immersed in their data-spaces. Furthermore, we provide a literature review showing the status quo of techniques and methods available for realizing this scenario under the raised restrictions. Finally, we propose a concept for an analysis framework, together with the decisions already made and those still to be taken, to outline how the described scenario and the collected methods are feasible in a real use case.

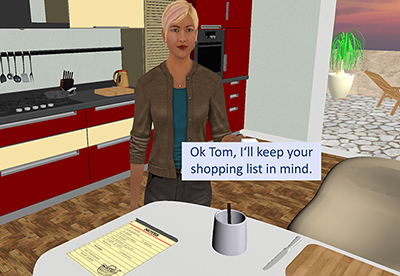

Evaluation of Approaching-Strategies of Temporarily Required Virtual Assistants in Immersive Environments

Embodied, virtual agents provide users assistance in agent-based support systems. To this end, two closely linked factors have to be considered for the agents’ behavioral design: their presence time (PT), i.e., the time in which the agents are visible, and the approaching time (AT), i.e., the time span between the user’s calling for an agent and the agent’s actual availability.

This work focuses on human-like assistants that are embedded in immersive scenes but that are required only temporarily. To the best of our knowledge, guidelines for a suitable trade-off between PT and AT of these assistants do not yet exist. We address this gap by presenting the results of a controlled within-subjects study in a CAVE. While keeping a low PT so that the agent is not perceived as annoying, three strategies affecting the AT, namely fading, walking, and running, are evaluated by 40 subjects. The results indicate no clear preference for any of the three behaviors. Instead, they demonstrate the need for a better trade-off between a low AT and an agent’s realistic behavior.

@InProceedings{Boensch2017b,

Title = {Evaluation of Approaching-Strategies of Temporarily Required Virtual Assistants in Immersive Environments},

Author = {Andrea B\"{o}nsch and Tom Vierjahn and Torsten W. Kuhlen},

Booktitle = {IEEE Symposium on 3D User Interfaces},

Year = {2017},

Pages = {69-72}

}

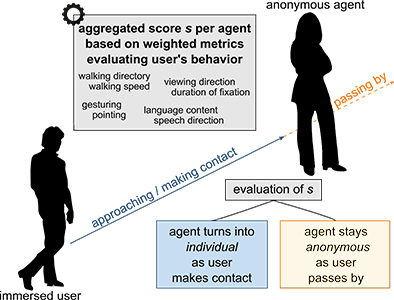

Turning Anonymous Members of a Multiagent System into Individuals

It is increasingly common to embed embodied, human-like, virtual agents into immersive virtual environments for one of two use cases: (1) populating architectural scenes as anonymous members of a crowd and (2) meeting or supporting users as individual, intelligent and conversational agents. However, the new trend towards intelligent cyber physical systems inherently combines both use cases. Thus, we argue for the necessity of multiagent systems consisting of anonymous and autonomous agents, who temporarily turn into intelligent individuals. Besides purely enlivening the scene, each agent can thus be engaged in a situation-dependent interaction by the user, e.g., a conversation or a joint task. To this end, we devise components for an agent’s behavioral design modeling the transition between an anonymous and an individual agent when a user approaches.

@InProceedings{Boensch2017c,

Title = {{Turning Anonymous Members of a Multiagent System into Individuals}},

Author = {Andrea B\"{o}nsch and Tom Vierjahn and Ari Shapiro and Torsten W. Kuhlen},

Booktitle = {IEEE Virtual Humans and Crowds for Immersive Environments},

Year = {2017},

Keywords = {Virtual Humans; Virtual Reality; Intelligent Agents; Multiagent System},

DOI = {10.1109/VHCIE.2017.7935620},

Owner = {ab280112},

Timestamp = {2017.02.28}

}

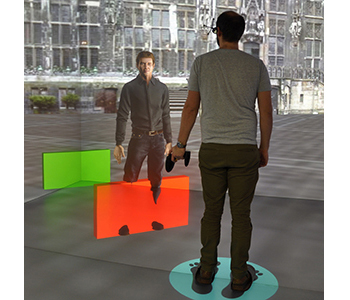

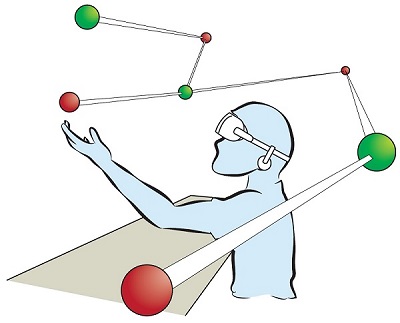

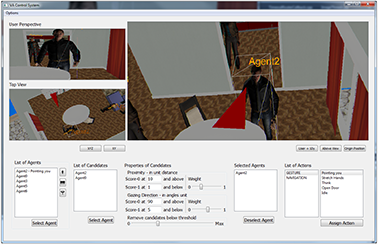

Poster: Score-Based Recommendation for Efficiently Selecting Individual Virtual Agents in Multi-Agent Systems

Controlling user-agent-interactions by means of an external operator includes selecting the virtual interaction partners fast and faultlessly. However, especially in immersive scenes with a large number of potential partners, this task is non-trivial.

Thus, we present a score-based recommendation system supporting an operator in the selection task. Agents are recommended as potential partners based on two parameters: the user’s distance to the agents and the user’s gazing direction. An additional graphical user interface (GUI) provides elements for configuring the system and for applying actions to those agents which the operator has confirmed as interaction partners.
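As a rough illustration of how a recommendation based on these two parameters could work, the following minimal sketch ranks agents by a weighted sum of proximity and gaze alignment. The scoring formula, the weights `w_dist` and `w_gaze`, the cut-off `max_dist`, the 2D positions and the function name `recommend` are all assumptions made for this example; the abstract does not specify how the poster's system actually computes its scores.

```python
import math


def recommend(user_pos, gaze_dir, agents, max_dist=10.0, w_dist=0.5, w_gaze=0.5):
    """Rank agents by a weighted score of proximity and gaze alignment.

    The formula, weights and max_dist are illustrative assumptions,
    not values taken from the poster.
    """
    gx, gy = gaze_dir
    gaze_len = math.hypot(gx, gy)
    scored = []
    for name, (ax, ay) in agents.items():
        dx, dy = ax - user_pos[0], ay - user_pos[1]
        dist = math.hypot(dx, dy)
        # Proximity term: 1 at the user's position, 0 at max_dist or beyond.
        proximity = max(0.0, 1.0 - dist / max_dist)
        # Gaze term: cosine between the gaze direction and the direction
        # to the agent, clamped to [0, 1] so agents behind the user score 0.
        if dist == 0.0 or gaze_len == 0.0:
            alignment = 0.0
        else:
            alignment = max(0.0, (dx * gx + dy * gy) / (dist * gaze_len))
        scored.append((w_dist * proximity + w_gaze * alignment, name))
    # Highest score first.
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [name for _, name in ranked]


# Hypothetical scene: the user stands at the origin and looks along +x.
ranking = recommend(
    user_pos=(0.0, 0.0),
    gaze_dir=(1.0, 0.0),
    agents={"front_near": (2.0, 0.0), "behind_near": (-2.0, 0.0), "front_far": (8.0, 0.0)},
)
```

With equal weights, an agent that is far away but in the line of sight can outrank a close agent behind the user; an operator-facing GUI such as the one described above would be the natural place to tune such weights.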

@InProceedings{Boensch2017d,

Title = {Score-Based Recommendation for Efficiently Selecting Individual Virtual Agents in Multi-Agent Systems},

Author = {Andrea Bönsch and Robert Trisnadi and Jonathan Wendt and Tom Vierjahn and Torsten W. Kuhlen},

Booktitle = {Proceedings of 23rd ACM Symposium on Virtual Reality Software and Technology},

Year = {2017},

Pages = {tba},

DOI={10.1145/3139131.3141215}

}

Poster: Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization

Immersive visualization (IV) fosters the creation of mental images of a data set, a scene, a procedure, etc. We devise an initial version of a design space for categorizing workflows that take advantage of IV. From this categorization, specific requirements for seamlessly integrating IV can be derived. We validate the design space with three workflows emerging from our research projects.

@InProceedings{Vierjahn2017,

Title = {Towards a Design Space Characterizing Workflows that Take Advantage of Immersive Visualization},

Author = {Tom Vierjahn and Daniel Zielasko and Kees van Kooten and Peter Messmer and Bernd Hentschel and Torsten W. Kuhlen and Benjamin Weyers},

Booktitle = {IEEE Virtual Reality Conference Poster Proceedings},

Year = {2017},

Pages = {329-330},

DOI={10.1109/VR.2017.7892310}

}

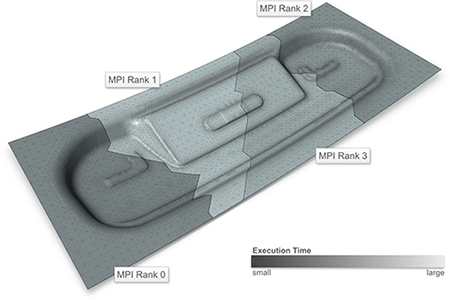

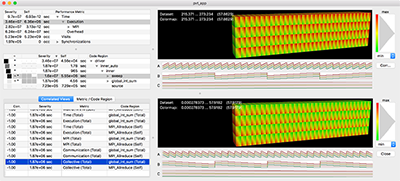

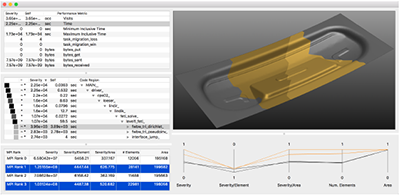

Visualizing Performance Data With Respect to the Simulated Geometry

Understanding the performance behaviour of high-performance computing (HPC) applications based on performance profiles is a challenging task. Phenomena in the performance behaviour can stem from the HPC system itself, from the application’s code, but also from the simulation domain. In order to analyse the latter phenomena, we propose a system that visualizes profile-based performance data in its spatial context in the simulation domain, i.e., on the geometry processed by the application. It thus helps HPC experts and simulation experts understand the performance data better. Furthermore, it reduces the initially large search space by automatically labeling those parts of the data that reveal variation in performance and thus require detailed analysis.

@inproceedings{VIERJAHN-2016-02,

Author = {Vierjahn, Tom and Kuhlen, Torsten W. and M\"{u}ller, Matthias S. and Hentschel, Bernd},

Booktitle = {JARA-HPC Symposium (accepted for publication)},

Title = {Visualizing Performance Data With Respect to the Simulated Geometry},

Year = {2016}}

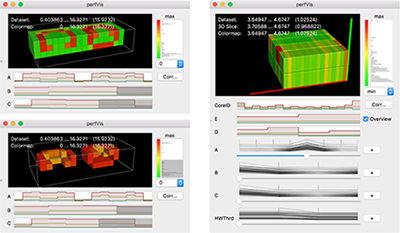

Using Directed Variance to Identify Meaningful Views in Call-Path Performance Profiles

Understanding the performance behaviour of massively parallel high-performance computing (HPC) applications based on call-path performance profiles is a time-consuming task. In this paper, we introduce the concept of directed variance in order to help analysts find performance bottlenecks in massive performance data and in the end optimize the application. According to HPC experts’ requirements, our technique automatically detects severe parts in the data that expose large variation in an application’s performance behaviour across system resources. Previously known variations are effectively filtered out. Analysts are thus guided through a reduced search space towards regions of interest for detailed examination in a 3D visualization. We demonstrate the effectiveness of our approach using performance data of common benchmark codes as well as from actively developed production codes.

@inproceedings{VIERJAHN-2016-04,

Author = {Vierjahn, Tom and Hermanns, Marc-Andr\'{e} and Mohr, Bernd and M\"{u}ller, Matthias S. and Kuhlen, Torsten W. and Hentschel, Bernd},

Booktitle = {3rd Workshop Visual Performance Analysis (to appear)},

Title = {Using Directed Variance to Identify Meaningful Views in Call-Path Performance Profiles},

Year = {2016}}

Poster: Correlating Sub-Phenomena in Performance Data in the Frequency Domain

Finding and understanding correlated performance behaviour of the individual functions of massively parallel high-performance computing (HPC) applications is a time-consuming task. In this poster, we propose filtered correlation analysis for automatically locating interdependencies in call-path performance profiles. Transforming the data into the frequency domain splits a performance phenomenon into sub-phenomena to be correlated separately. We provide the mathematical framework and an overview of the visualization, and we demonstrate the effectiveness of our technique.
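The general idea of correlating sub-phenomena separately can be illustrated with a small, self-contained sketch: two synthetic signals are split into frequency bands with a plain discrete Fourier transform, and each band is correlated on its own. This is only one possible reading of the idea, not the poster's mathematical framework; the helper names `dft`, `band_component` and `pearson`, the band limits and the synthetic data are all assumptions made for this example.

```python
import cmath
import math


def dft(values):
    """Naive discrete Fourier transform (O(n^2)); fine for short profiles."""
    n = len(values)
    return [
        sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(values))
        for k in range(n)
    ]


def band_component(values, lo, hi):
    """Keep only frequency bins lo..hi (and their mirrored bins), then invert."""
    n = len(values)
    spectrum = dft(values)
    kept = [c if lo <= min(k, n - k) <= hi else 0 for k, c in enumerate(spectrum)]
    return [
        sum(c * cmath.exp(2j * math.pi * k * t / n) for k, c in enumerate(kept)).real / n
        for t in range(n)
    ]


def pearson(a, b):
    """Pearson correlation coefficient of two equally long sequences."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


# Synthetic stand-ins for two functions' per-process values (assumed data):
# they agree in a slow component and oppose each other in a fast one.
n = 32
f = [math.sin(2 * math.pi * t / n) + math.sin(2 * math.pi * 8 * t / n) for t in range(n)]
g = [math.sin(2 * math.pi * t / n) - math.sin(2 * math.pi * 8 * t / n) for t in range(n)]

overall = pearson(f, g)
low = pearson(band_component(f, 1, 2), band_component(g, 1, 2))
high = pearson(band_component(f, 7, 9), band_component(g, 7, 9))
```

In this toy setup the overall correlation is close to zero, while the low band is strongly positively correlated and the high band strongly negatively correlated, so the interdependency only becomes visible once the bands are inspected separately.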

Best Poster Award!

@inproceedings{Vierjahn-2016-03,

Author = {Vierjahn, Tom and Hermanns, Marc-Andr\'{e} and Mohr, Bernd and M\"{u}ller, Matthias S. and Kuhlen, Torsten W. and Hentschel, Bernd},

Booktitle = {LDAV 2016 -- Posters (accepted)},

Date-Added = {2016-08-31 22:14:47 +0000},

Date-Modified = {2016-08-31 22:15:58 +0000},

Title = {Correlating Sub-Phenomena in Performance Data in the Frequency Domain}

}

Poster: Evaluating Presence Strategies of Temporarily Required Virtual Assistants

Computer-controlled virtual humans can serve as assistants in virtual scenes. Here, they are usually in almost constant contact with the user. Nonetheless, in some applications assistants are required only temporarily. Consequently, presenting them only when needed, i.e., minimizing their presence time, might be advisable.

To the best of our knowledge, there do not yet exist any design guidelines for such agent-based support systems. Thus, we plan to close this gap by a controlled qualitative and quantitative user study in a CAVE-like environment. We expect users to prefer assistants with a low presence time as well as a low fallback time to get quick support. However, as both factors are linked, a suitable trade-off needs to be found. Thus, we plan to test four different strategies, namely fading, moving, omnipresent and busy. This work presents our hypotheses and our planned within-subject design.

@InBook{Boensch2016c,

Title = {Evaluating Presence Strategies of Temporarily Required Virtual Assistants},

Author = {Andrea B\"{o}nsch and Tom Vierjahn and Torsten W. Kuhlen},

Pages = {387--391},

Publisher = {Springer International Publishing},

Year = {2016},

Month = {September},

Abstract = {Computer-controlled virtual humans can serve as assistants in virtual scenes. Here, they are usually in an almost constant contact with the user. Nonetheless, in some applications assistants are required only temporarily. Consequently, presenting them only when needed, i.e., minimizing their presence time, might be advisable.

To the best of our knowledge, there do not yet exist any design guidelines for such agent-based support systems. Thus, we plan to close this gap by a controlled qualitative and quantitative user study in a CAVE-like environment. We expect users to prefer assistants with a low presence time as well as a low fallback time to get quick support. However, as both factors are linked, a suitable trade-off needs to be found. Thus, we plan to test four different strategies, namely fading, moving, omnipresent and busy. This work presents our hypotheses and our planned within-subject design.},

Booktitle = {Intelligent Virtual Agents: 16th International Conference, IVA 2016. Proceedings},

Doi = {10.1007/978-3-319-47665-0_39},

Keywords = {Virtual agent, Assistive technology, Immersive virtual environments, User study design},

Owner = {ab280112},

Timestamp = {2016.08.01},

Url = {http://dx.doi.org/10.1007/978-3-319-47665-0_39}

}

Poster: Geometry-Aware Visualization of Performance Data

Phenomena in the performance behaviour of high-performance computing (HPC) applications can stem from the HPC system itself, from the application's code, but also from the simulation domain. In order to analyse the latter phenomena, we propose a system that visualizes profile-based performance data in its spatial context, i.e., on the geometry, in the simulation domain. It thus helps HPC experts but also simulation experts understand the performance data better. In addition, our tool reduces the initially large search space by automatically labelling large-variation views on the data which require detailed analysis.

@inproceedings {eurp.20161136,

booktitle = {EuroVis 2016 - Posters},

editor = {Tobias Isenberg and Filip Sadlo},

title = {{Geometry-Aware Visualization of Performance Data}},

author = {Vierjahn, Tom and Hentschel, Bernd and Kuhlen, Torsten W.},

year = {2016},

publisher = {The Eurographics Association},

ISBN = {978-3-03868-015-4},

DOI = {10.2312/eurp.20161136},

pages = {37--39}

}

Talk: Two Basic Aspects of Virtual Agents’ Behavior: Collision Avoidance and Presence Strategies

Virtual Agents (VAs) are embedded in virtual environments for two reasons: they enliven architectural scenes by representing more realistic situations, and they are dialogue partners. They can function as training partners such as representing students in a teaching scenario, or as assistants by, e.g., guiding users through a scene or by performing certain tasks either individually or in collaboration with the user. However, designing such VAs is challenging as various requirements have to be met. Two relevant factors will be briefly discussed in the talk: Collision Avoidance and Presence Strategies.

Surface-Reconstructing Growing Neural Gas: A Method for Online Construction of Textured Triangle Meshes

In this paper we propose surface-reconstructing growing neural gas (SGNG), a learning-based artificial neural network that iteratively constructs a triangle mesh from a set of sample points lying on an object's surface. From these input points SGNG automatically approximates the shape and the topology of the original surface. It furthermore assigns suitable textures to the triangles if images of the surface are available that are registered to the points.

By expressing topological neighborhood via triangles, and by learning visibility from the input data, SGNG constructs a triangle mesh entirely during online learning and does not need any post-processing to close untriangulated holes or to assign suitable textures without occlusion artifacts. Thus, SGNG is well suited for long-running applications that require an iterative pipeline where scanning, reconstruction and visualization are executed in parallel.

Results indicate that SGNG improves upon its predecessors and achieves similar or even better performance in terms of smaller reconstruction errors and better reconstruction quality than existing state-of-the-art reconstruction algorithms. If the input points are updated repeatedly during reconstruction, SGNG performs even faster than existing techniques.

Best Paper Award!